AI-Powered Linguistic Tool

In this project, we're creating three super important solutions for a language tech company. First, an intelligent question-answering system to automate responses. Second, a smart scraper with advanced DOM analysis

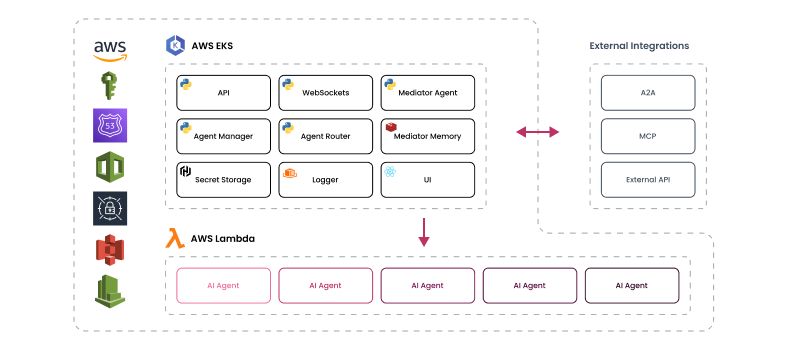

Behind the interface is a scalable generative AI platform designed for AI agent orchestration. Enterprise AI systems gain the flexibility to connect AI assistants, integrate external services, and process real-time data from multiple sources.

Generative AI, Enterprise Artificial Intelligence

USA, Europe

Creating a unified generative AI ecosystem where AI agents, assistants, and applications collaborate across multiple AI models.

Full-cycle generative AI platform development focused on AI agent orchestration, model context protocol, MCP architecture, and a custom workflow builder.

10 specialists

3.5 months, ongoing (6+ months MVP phase)

faster workflow creation through agent orchestration frameworks

reduction in manual coordination between AI agents

major large language models unified through MCP servers

higher stability in complex AI systems powered by agentic AI orchestration

The goal was not simply to build a chatbot. The ambition was to develop a scalable AI system in which autonomous AI agents coordinate complex workflows through agent orchestration built on the Model Context Protocol (MCP).

Traditional AI applications often rely on older approaches, such as static integrations or isolated AI tools. These approaches struggle to support complex workflows, customer interactions, and real-time data access from multiple external data sources.

To unlock business value, the platform needed a new orchestration approach capable of managing AI agent capabilities, tool usage, and function calling while maintaining human oversight and strong risk management.

Our team joined during the early stages of product development. Architectural decisions centered on the Model Context Protocol (MCP), an open protocol designed to connect AI assistants with external systems and tools.

Instead of building isolated integrations, the architecture relied on MCP hosts, MCP servers, and MCP clients communicating through a transport layer with server-sent events and asynchronous messaging.
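As a rough illustration of this exchange: the public MCP specification frames messages as JSON-RPC 2.0, and a tool invocation uses the `tools/call` method. The sketch below builds such a request on the client side and frames the server's reply as a server-sent event. The tool name `search_docs`, its arguments, and all helper functions are hypothetical; the case study does not describe the platform's actual wire format.

```python
import json
from itertools import count

_request_ids = count(1)  # simple monotonically increasing request ids


def make_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


def make_tool_result(request_id: int, text: str) -> dict:
    """Build the matching JSON-RPC 2.0 response carrying a text result."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "result": {"content": [{"type": "text", "text": text}], "isError": False},
    }


def to_sse_event(message: dict) -> str:
    """Frame a message as one server-sent event: a data line plus a blank line."""
    return f"data: {json.dumps(message, separators=(',', ':'))}\n\n"


def from_sse_event(frame: str) -> dict:
    """Parse a server-sent event frame back into a message."""
    data_lines = [
        line[len("data:"):].strip()
        for line in frame.splitlines()
        if line.startswith("data:")
    ]
    return json.loads("\n".join(data_lines))


# Hypothetical tool name and arguments, for illustration only.
request = make_tool_call("search_docs", {"query": "pricing"})
response = make_tool_result(request["id"], "3 documents found")
frame = to_sse_event(response)
```

The response `id` mirrors the request `id`, which is what lets a client match asynchronous replies to the calls that triggered them.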

This standardized protocol enabled AI agents to retrieve relevant information, access local resources, and interact with remote resources in a plug-and-play environment, much like a USB-C port that connects multiple devices through a single interface.

The platform evolved into a modular agentic AI environment where AI assistants, AI agents, and AI tools collaborate through agent orchestration frameworks.

The architecture also supports fine-grained control over tool permissions, data access, and agent performance, ensuring safe interaction between AI systems and enterprise environments while enabling more reliable AI-assisted decision-making in business processes.
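One way to picture per-tool permissions combined with human oversight is a small policy check that runs before any tool invocation. This is a minimal sketch under assumptions: the `ToolPolicy` type, the `authorize` function, the three decision strings, and the tool names are all hypothetical and are not taken from the platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    """Hypothetical per-agent policy: which tools may run, and which need sign-off."""
    allowed_tools: frozenset = frozenset()
    needs_approval: frozenset = frozenset()


def authorize(policy: ToolPolicy, tool_name: str, approved_by_human: bool = False) -> str:
    """Return 'deny', 'pending_approval', or 'allow' for a requested tool call."""
    if tool_name not in policy.allowed_tools:
        return "deny"  # tool is not on the agent's allow-list
    if tool_name in policy.needs_approval and not approved_by_human:
        return "pending_approval"  # sensitive tool: wait for a human decision
    return "allow"


# Hypothetical tool names, for illustration only.
policy = ToolPolicy(
    allowed_tools=frozenset({"read_crm", "send_email"}),
    needs_approval=frozenset({"send_email"}),
)
```

Keeping the decision in one pure function makes it straightforward to log every outcome and to audit why a call was blocked.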

Early versions relied on loosely connected agents, which made complex flows unpredictable. Strengthening AI agent orchestration and structured multi-agent communication became essential to building a reliable execution environment.

Static execution chains limited experimentation. A need emerged for a custom AI workflow builder that would allow teams to customize agent workflows without rewriting core logic.

Supporting multiple providers required deeper LLM integration services. Agent orchestration demanded seamless switching between models while maintaining stable execution.

As adoption grew, scalable infrastructure and flexible AI deployment options became critical. The platform had to support both cloud and self-hosted environments to meet enterprise AI expectations.

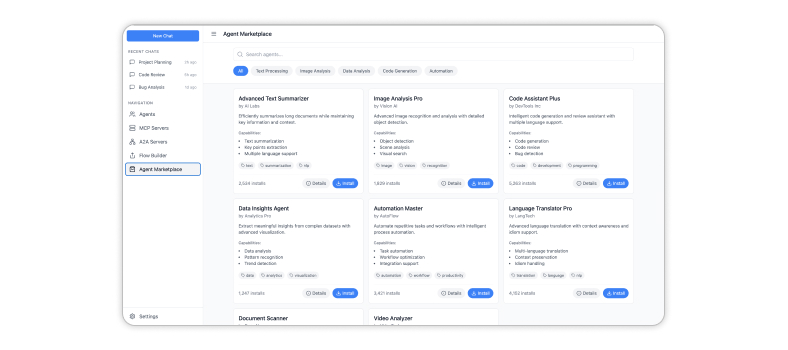

To encourage community growth, the solution needed extensibility. Enabling reusable agents and tool publishing positioned the platform for long-term modernization and AI marketplace expansion.

The architecture was designed around the Model Context Protocol (MCP) to create a stable orchestration environment for AI agents. Our goal was to enable AI assistants to access external systems, data sources, and remote resources using function calling while maintaining human oversight and security controls.

A foundational communication library was created to support structured tool invocation and stable multi-agent communication between autonomous AI agents operating inside the platform.

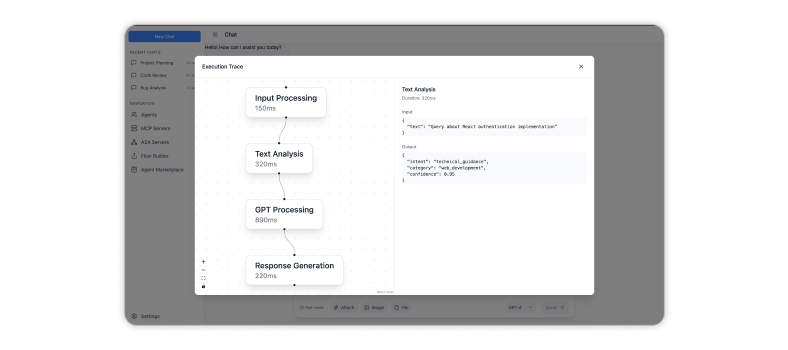

A protocol-driven execution engine was developed with support for the Model Context Protocol (MCP), A2A, and internal standards, enabling consistent AI agent orchestration across complex workflows.

A modular custom AI workflow builder allowed users to design and customize agent workflows, combine tools, and create reusable logic for different execution scenarios.
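The idea of composing reusable steps without touching core logic can be sketched as a tiny pipeline type. Everything here is an assumption made for illustration: the `Workflow` class, the `then` and `run` methods, and the two example steps are hypothetical and do not reflect the platform's actual builder.

```python
from dataclasses import dataclass, field
from typing import Callable

# A step takes the shared context and returns an updated copy of it.
Step = Callable[[dict], dict]


@dataclass
class Workflow:
    """Hypothetical workflow: an ordered list of steps applied to a context."""
    name: str
    steps: list = field(default_factory=list)

    def then(self, step: Step) -> "Workflow":
        """Append a step and return self so calls can be chained."""
        self.steps.append(step)
        return self

    def run(self, context: dict) -> dict:
        """Run every step in order, passing each result to the next step."""
        for step in self.steps:
            context = step(context)
        return context


def normalize_question(context: dict) -> dict:
    """Example step: trim and lowercase the incoming question."""
    return {**context, "question": context["question"].strip().lower()}


def tag_language(context: dict) -> dict:
    """Example step: attach a fixed language tag (a stand-in for real detection)."""
    return {**context, "language": "en"}


workflow = Workflow("triage").then(normalize_question).then(tag_language)
result = workflow.run({"question": "  What is MCP?  "})
```

Because each step only reads and returns a context dictionary, steps can be reordered or reused across workflows without changes to the runner.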

Advanced LLM integration services enabled dynamic provider switching, fallback handling, and guided execution to maintain reliability across multiple models.
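Fallback handling across providers can be sketched as trying each configured model in priority order and moving on when one fails. This is an illustrative sketch, not the platform's implementation: `ProviderError`, `complete_with_fallback`, and the two stand-in providers are hypothetical, and real provider SDK calls are deliberately left out.

```python
from typing import Callable, Sequence


class ProviderError(Exception):
    """Hypothetical error raised when a provider call fails."""


def complete_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try providers in order; return (provider_name, completion) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")  # remember why this provider was skipped
    raise ProviderError("all providers failed: " + "; ".join(errors))


def flaky_provider(prompt: str) -> str:
    """Stand-in for a provider that is currently unavailable."""
    raise ProviderError("rate limited")


def stable_provider(prompt: str) -> str:
    """Stand-in for a provider that answers normally."""
    return f"echo: {prompt}"


used, answer = complete_with_fallback(
    "hello",
    [("primary", flaky_provider), ("secondary", stable_provider)],
)
```

Returning the provider name alongside the completion lets the caller record which model actually served the request.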

Web, CLI, and early desktop interfaces were introduced to ensure accessible orchestration while maintaining a unified execution core.

Cloud-based pipelines and billing logic were implemented to support scalable infrastructure, enterprise AI deployment readiness, and future self-hosted environments.

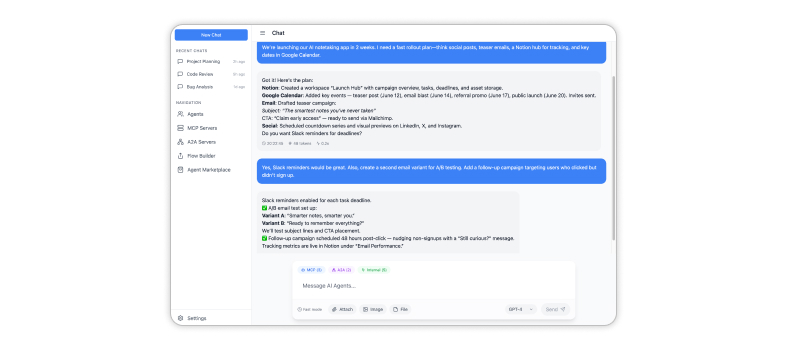

An interactive interface powered by AI agents, AI assistants, and natural language processing to support customer interactions.

A visual tool enabling users to design AI workflows, integrate external tools, and automate repetitive tasks.

An execution layer implementing the Model Context Protocol (MCP) architecture for reliable agent orchestration.

A marketplace where software developers publish reusable AI tools, AI assistants, and AI applications.

A web application, a command-line interface, and integrations with development environments that can also host custom AI chatbot solutions for businesses.

Infrastructure supporting enterprise AI deployment, security monitoring, and scalable AI systems that are suitable for highly regulated domains.

The introduction of a custom AI workflow builder accelerated execution across teams. Users can now customize agent workflows and deploy new logic significantly faster.

Structured AI agent orchestration reduced friction between autonomous AI agents and improved multi-agent communication, making complex processes easier to manage at scale.

Advanced LLM integration services enabled seamless use of multiple models within a single platform, strengthening enterprise AI flexibility and supporting long-term AI development goals.

Protocol-driven execution based on the Model Context Protocol (MCP) improved reliability across multi-agent communication flows and reduced operational uncertainty.

The solution supports flexible AI deployment and scalable infrastructure, preparing the platform for enterprise growth and marketplace expansion.

Reusable workflows and extensible orchestration capabilities transformed the solution into a scalable generative AI environment ready for monetization and expansion.

Companies planning to develop a generative AI platform or seeking enterprise AI orchestration solutions can rely on CHI Software as a long-term technology partner.


