AI Assistant Integration for an ERP System

We kicked off a project to create an AI assistant to make the client's ERP system even better. Our goal was to develop a text-based Q&A AI assistant that users

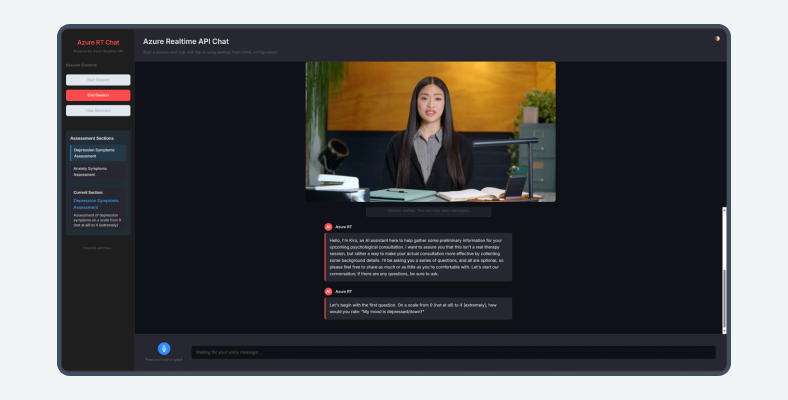

A German research institute partnered with CHI Software to develop AI-based psychological assessment software powered by LLM agents, real-time avatars, and multi-level validation. The result: a scalable, safe, and interactive platform that transforms traditional diagnostics into guided conversational experiences.

Education and psychology

Germany

Turn research in diagnostics and coaching into a scalable conversational platform with a virtual avatar and strict safety rules.

Prototype of an intelligent avatar-driven assessment system that combines LLM agents, a proprietary knowledge base, and multi-level validation.

4 specialists

12 months, ongoing

80% reduction in latency in avatar conversations, under 500 ms in tests

65% increase in session duration during pilot use

proprietary content coverage with zero hallucinations in validated flows

Architecture ready for new agents, new languages, and extended validation

CHALLENGE

The institute had years of experience and proven methods in diagnostics and coaching, but no digital product that matched that level of expertise. Its earlier digital tools were mostly long, tedious forms with static questions and high drop-off rates. It needed a new approach to psychological assessment software, one that lets people express themselves naturally while staying within strict medical and ethical boundaries.

ENGAGEMENT STAGE

The client wanted an AI-powered avatar that gives the chatbot a digital face and makes automated conversations more engaging. CHI Software joined when the concept of an avatar guiding users through an AI psychological assessment was clear, but the technology architecture was not. In joint workshops with psychologists, the teams mapped real conversations, escalation paths, and questionnaire flows. From there, they agreed to build a controlled psychological assessment tool with agents and validation while leaving room for future growth.

TRANSFORMATION

Step by step, the experiments took shape as a working prototype of an AI-based psychological assessment with a live avatar. Users can now speak or type their answers, and the avatar responds in under half a second while still running every reply through knowledge checks and validation before it appears on screen. That’s the power of combining fast AI language models with authoritative scientific knowledge.

Experts can also review conversations, fine-tune the rules, and plan new scenarios, which helped to flesh out the prototype as the foundation for long-term development.

For readers looking for a broader view on how knowledge-based assistants work in practice, we’ve explained the concept in more detail in our article “Knowledge Base Chatbots: Benefits, Use Cases & Integration Tips.”


LATENCY AND CONVERSATION FLOW

The institute wanted the avatar to feel present in the room. Long pauses between questions and answers destroyed immersion. A core part of AI in psychological assessment here was simple: keep the delay so small that users forget they are talking to a machine.

KNOWLEDGE GROUNDING AND SAFETY

Generic models often improvise. For psychological and medical contexts, improvisation is not acceptable. The team needed a psychological safety assessment tool that relied solely on vetted materials and kept a clear record of why each answer appeared.
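The idea of answering only from vetted materials, with a record of why each answer appeared, can be sketched roughly as follows. This is a minimal illustration, not the project's implementation: the `Passage` and `GroundedAnswer` types, the `kb-…` identifiers, and the simple word-overlap matching are all assumptions made for the example. A production system would likely use a proper retrieval layer instead.

```python
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass(frozen=True)
class Passage:
    source_id: str  # hypothetical identifier of a vetted document
    text: str


@dataclass(frozen=True)
class GroundedAnswer:
    text: str
    source_id: Optional[str]  # None means no vetted passage supported an answer


FALLBACK = "I can't answer that from the approved materials."


def _tokens(s: str) -> Set[str]:
    # Crude tokenizer for illustration: lowercase words longer than 3 characters.
    return {w.strip(".,?!").lower() for w in s.split() if len(w) > 3}


def answer_from_vetted(question: str, passages: List[Passage],
                       min_overlap: int = 2) -> GroundedAnswer:
    """Return the best-matching vetted passage, or a fallback if none matches."""
    q = _tokens(question)
    best, best_score = None, 0
    for p in passages:
        score = len(q & _tokens(p.text))
        if score > best_score:
            best, best_score = p, score
    if best is None or best_score < min_overlap:
        # Refuse rather than improvise when the knowledge base has no support.
        return GroundedAnswer(FALLBACK, None)
    # The source_id is the audit trail: it records why this answer appeared.
    return GroundedAnswer(best.text, best.source_id)


VETTED = [
    Passage("kb-001", "Burnout describes prolonged emotional exhaustion "
                      "caused by chronic workplace stress."),
    Passage("kb-002", "Sleep hygiene covers habits that support regular restful sleep."),
]
```

The design choice worth noting is the explicit fallback: when nothing in the vetted set matches well enough, the function declines instead of generating text.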

VALIDATION AND ESCALATION

Some answers must pass to a human expert. The system needed a structured path where a psychological assessment AI agent could raise a flag, record context, and hand off the case for professional review.
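A structured hand-off of this kind (raise a flag, record context, pass the case to a reviewer) might look like the sketch below. Everything named here is hypothetical: the `Escalation` record, the in-memory `ReviewQueue`, and especially the `RISK_TERMS` list, which is a placeholder only. In practice the escalation criteria would be defined by the psychologists.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List

# Placeholder trigger list for illustration only; real criteria come from experts.
RISK_TERMS = ("self-harm", "hurt myself")


@dataclass
class Escalation:
    session_id: str
    reason: str
    context: List[str]  # the most recent user messages, kept for the reviewer
    created_at: str


@dataclass
class ReviewQueue:
    items: List[Escalation] = field(default_factory=list)

    def hand_off(self, escalation: Escalation) -> None:
        self.items.append(escalation)


def check_and_escalate(session_id: str, transcript: List[str],
                       queue: ReviewQueue) -> bool:
    """Flag the session if the latest message contains a risk term."""
    if not transcript:
        return False
    last = transcript[-1].lower()
    hit = next((term for term in RISK_TERMS if term in last), None)
    if hit is None:
        return False
    queue.hand_off(Escalation(
        session_id=session_id,
        reason=f"risk term: {hit}",
        context=transcript[-3:],  # record up to three messages of context
        created_at=datetime.now(timezone.utc).isoformat(),
    ))
    return True
```

Keeping the recorded context alongside the reason means the human reviewer does not have to reconstruct the conversation before acting.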

SCALABILITY AND EXTENSIBILITY

The institute planned new languages, new assessment scenarios, and new partner organizations. The architecture had to make future evolution natural, not painful, especially for long-term psychological assessment software and coaching programs.

STRATEGY

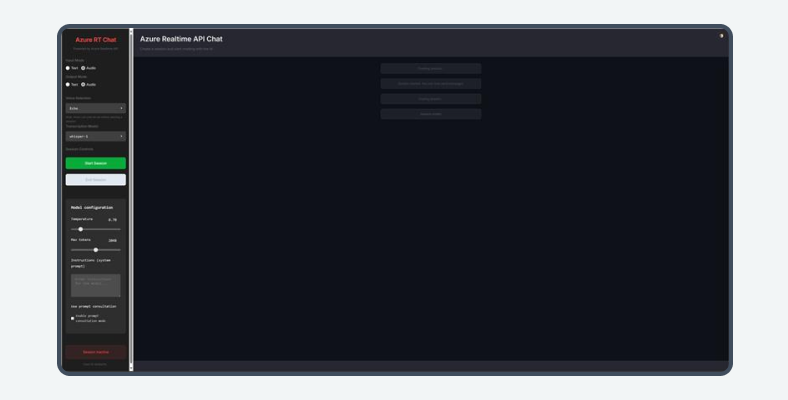

The work focused on a safe, flexible foundation. The client wanted an expressive avatar on the surface and a reliable engine behind it. The team developed a layered psychological assessment software architecture where conversation, reasoning, and validation stay independent but closely connected, so the prototype can grow into future products and full-scale development.

DEVELOPMENT PHASES

Real assessment and coaching sessions were analysed, typical dialogue patterns were mapped, and flows were documented. On this basis, the first modular psychological assessment tool design appeared with separate agents for intent, knowledge, validation, and handover.

Engineers built a real-time communication layer with OpenAI Realtime API, WebRTC, and WebSockets. Custom face and body animation models allowed the avatar to react naturally, and response time in tests stayed under 500 ms, which made it suitable for live AI psychological assessment sessions.

A network of agents on LangChain and LangGraph took responsibility for context control. One agent handled intent, another retrieved knowledge, and another checked consistency with earlier answers. Together, they formed a controllable psychological assessment AI agent environment that can be extended over time.
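The division of labour between agents can be illustrated with a small plain-Python pipeline. This sketch deliberately does not use the LangChain or LangGraph APIs. The `State` fields, the keyword-based agents, and the tiny `KB` dictionary are assumptions made for the example, standing in for the LLM-backed agents the project describes.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class State:
    user_text: str
    question_key: str  # hypothetical id of the question being answered
    history: Dict[str, str] = field(default_factory=dict)  # earlier answers
    intent: Optional[str] = None
    knowledge: Optional[str] = None
    issues: List[str] = field(default_factory=list)


Agent = Callable[[State], State]

# Stand-in for the proprietary knowledge base.
KB = {"scale": "Answers use a scale from 1 (never) to 5 (always)."}


def intent_agent(state: State) -> State:
    # Toy heuristic: a trailing question mark means the user is asking something.
    state.intent = "question" if state.user_text.strip().endswith("?") else "answer"
    return state


def knowledge_agent(state: State) -> State:
    if state.intent == "question":
        text = state.user_text.lower()
        state.knowledge = next((v for k, v in KB.items() if k in text), None)
    return state


def consistency_agent(state: State) -> State:
    # Compare a new answer with what the user said earlier for the same question.
    if state.intent == "answer":
        earlier = state.history.get(state.question_key)
        if earlier is not None and earlier.strip().lower() != state.user_text.strip().lower():
            state.issues.append(f"'{state.question_key}' differs from earlier answer")
    return state


PIPELINE: List[Agent] = [intent_agent, knowledge_agent, consistency_agent]


def run_pipeline(state: State, agents: List[Agent]) -> State:
    for agent in agents:
        state = agent(state)
    return state
```

Because each agent only reads and writes the shared state, a new agent can be added to `PIPELINE` without touching the others, which is the extensibility property the text describes.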

A configurable engine for questionnaires and assessments was introduced. Psychologists define flows, scoring, and branching rules without changing backend code. The avatar guides users through forms, explains complex terms, and checks completeness, turning the prototype into a practical assessment platform.
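One way to picture a questionnaire engine where flows, scoring, and branching live in configuration rather than backend code is the sketch below. The `FLOW` structure, its question ids, and the `"*"` default-branch convention are invented for illustration and are not the project's actual format.

```python
from typing import Dict, List, Tuple

# Hypothetical flow definition a psychologist could edit without code changes.
FLOW = {
    "start": "q_sleep",
    "questions": {
        "q_sleep": {
            "text": "How often do you have trouble sleeping?",
            "scores": {"never": 0, "sometimes": 1, "often": 2},
            # Branching rule: "often" leads to a follow-up, "*" is the default.
            "next": {"often": "q_sleep_detail", "*": "q_mood"},
        },
        "q_sleep_detail": {
            "text": "How often does poor sleep affect your day?",
            "scores": {"never": 0, "sometimes": 1, "often": 2},
            "next": {"*": "q_mood"},
        },
        "q_mood": {
            "text": "How often do you feel low?",
            "scores": {"never": 0, "sometimes": 1, "often": 2},
            "next": {"*": None},  # None ends the flow
        },
    },
}


def run_flow(flow: dict, answers: Dict[str, str]) -> Tuple[int, List[str]]:
    """Walk the flow with the given answers; return (total score, visited path)."""
    total, path, current = 0, [], flow["start"]
    while current is not None:
        question = flow["questions"][current]
        answer = answers.get(current)
        if answer not in question["scores"]:
            # Completeness check: every visited question needs a valid answer.
            raise ValueError(f"missing or invalid answer for {current}")
        total += question["scores"][answer]
        path.append(current)
        rules = question["next"]
        current = rules.get(answer, rules.get("*"))
    return total, path
```

For example, answering "often" to the first question routes the user through the follow-up, while any other answer skips it.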

Validation rules and expert review steps were added above the conversation layer. Answers pass several checks before reaching the user, while interaction logs feed analytics dashboards. Psychologists can see how the system behaves, adjust rules, and plan the next stages of behavioral health assessment software development.
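A layered validation step that also feeds a log for later analysis could be sketched like this. The individual checks, the `BANNED` phrase list, and the log record shape are hypothetical examples. The actual rules would be the ones psychologists configure.

```python
from typing import Callable, Dict, List, Optional

# A check returns a failure reason, or None if the reply passes.
Check = Callable[[str], Optional[str]]

BANNED = ("diagnosis:", "you should take")  # hypothetical phrases for illustration


def not_empty(reply: str) -> Optional[str]:
    return None if reply.strip() else "empty reply"


def within_length(reply: str) -> Optional[str]:
    return None if len(reply) <= 400 else "reply too long"


def no_banned_phrases(reply: str) -> Optional[str]:
    lowered = reply.lower()
    hit = next((p for p in BANNED if p in lowered), None)
    return f"banned phrase: {hit}" if hit else None


CHECKS: List[Check] = [not_empty, within_length, no_banned_phrases]


def validate_reply(reply: str, checks: List[Check], log: List[Dict]) -> bool:
    """Run every check; log the outcome; return True only if all checks pass."""
    failures = [reason for reason in (check(reply) for check in checks)
                if reason is not None]
    # Every decision is logged, passed or not, so dashboards see the full picture.
    log.append({"reply": reply, "passed": not failures, "failures": failures})
    return not failures
```

Running all checks instead of stopping at the first failure gives reviewers the complete list of reasons a reply was blocked, which is more useful when adjusting rules.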

Users talk to a virtual avatar that listens and responds in real time, keeping the dialogue flowing like a live consultation. This makes the AI psychological assessment feel more natural and less stressful.

The system walks users through medical and psychological forms, explains difficult terms, and checks for missing answers so that the AI-based psychological assessment becomes a supported process, not a cold and clinical checklist.

Every reply relies on the institute’s own methods and content, so the psychological assessment software stays consistent with validated practice instead of improvising.

Psychologists configure question paths, scoring, and feedback without code changes, which makes it easy to create new scenarios for training or evaluation.

Built-in rules, validation checks, and clear handover paths make sure sensitive cases reach human specialists when needed.

Interaction data highlights where users hesitate, which explanations help most, and how engagement changes over time, supporting continuous improvement of the service.

80% LATENCY REDUCTION IN SESSIONS

Average response time dropped below 500 ms, so conversations with the avatar feel natural and uninterrupted during live AI psychological assessment sessions.

65% LONGER AND MORE FOCUSED USER SESSIONS

Pilot users stayed in conversations longer, asked more follow-up questions, and fully completed assessment flows more often than with previous tools.

ZERO HALLUCINATIONS IN VALIDATED FLOWS

All of the avatar’s replies in tested scenarios stayed within proprietary content and rules, which increased trust in the psychological assessment software among psychologists and medical reviewers.

STRONGER BASIS FOR CLINICAL AND TRAINING USE

Multi-level validation, audit-friendly logs, and expert review options created a stable foundation for future certification, wider rollout, and broader behavioral health assessment software development.

ARCHITECTURE READY FOR NEW AGENTS AND LANGUAGES

Platform design supports the addition of new agents, languages, and extra validation steps without major refactoring, so further behavioral health assessment software development stays predictable and manageable.

Companies looking to upgrade their psychological assessment software, or to create one from the ground up, can rely on CHI Software’s experience working with avatars, agents, and safety-focused AI. Our team has experience turning research-based methods into working products that real users trust and that experts can keep under close control.

