ChatGPT has reshaped our interactions with chatbots, setting a new bar for digital assistance and making it easier than ever to build a chatbot for your business. You might have already witnessed its convenience for personal daily tasks. But imagine channeling the power of artificial neural networks and machine translation into your business!

Fine-tuned GPT models can enhance your employees’ performance, boost customer engagement, and become the cornerstone of your business app. When combined with custom chatbot development, these models can deliver unique solutions that address specific business needs, providing a one-of-a-kind user experience.

So, how do you train GPT? Our article offers a roadmap for tailoring GPT to your business needs, enriched with practical insights from our generative AI development team.

GPT AI Optimization Case: An Interactive Chatbot

What advantages can a generative pre-trained transformer (GPT) model integration bring to your business? Let us describe it with an example from a solution we developed for a Japanese telecom market player.

Project Background

Our client, a top telecom company in Japan, aimed to build a more solid connection with their customers. So, they decided to launch a new mobile app featuring an appealing cartoon mascot. The character would interact with users to engage, provide entertainment, and share information about the company’s services. All of it is possible with ChatGPT development services.

Our Solution: A Mobile App with a GPT-Based Chatbot

As an AI chatbot development company, the CHI Software team created an application that is compatible with web and mobile platforms. Its key feature is a 2D animated mascot that comes to life through an interactive question-answering solution powered by Natural Language Processing (NLP) and GPT technology.

The friendly character should be versatile in interactions, from detailing the company’s offerings to engaging in casual talk or jokes. Like nurturing a Tamagotchi, users can teach the mascot new words and influence its personality. The pet can also respond to touch, making the experience more interactive and creating a deeper emotional connection.

Among other things, our AI/ML engineering team focused on deep learning concepts and advanced GPT model customization through diverse datasets. Ensuring that our mascot always stays upbeat and maintains a consistently pleasant personality was crucial. Additionally, we armed it with extensive knowledge about the industry and company services.

Project Results: How GPT Fine-Tuning Impacted Business Growth

The app quickly won over the audience thanks to its engaging animated character. After its launch, our client identified the following business prospects:

- Boost in customer satisfaction: The client sees the potential for up to a 15% increase in customer satisfaction thanks to the chatbot’s swift and accurate replies to user input. The GPT model’s natural language processing makes this possible. ChatGPT integration services were crucial in seamlessly incorporating this technology to enhance the app’s user experience.

- Greater audience engagement: The client’s goal is to increase user interactions by 20%, enhancing user involvement with the app. To achieve more meaningful and engaging interactions, the chatbot was based on OpenAI’s GPT model, which offers an increased maximum context length and sentiment analysis of natural language.

- Higher conversion rates: The client aims for an 8%-10% increase in conversion rates by guiding users in their purchasing process and offering personal recommendations.

- Operational cost reduction: Automating customer service cut operational costs by 20%, resulting in significant financial savings.

- Painless scalability: The question-answering solution can talk to many people at once, helping the business grow by 30% without sacrificing service quality.

- Broader customer base: The more natural languages the chatbot can understand, the better your business will be received worldwide. That’s why our chatbot comes with general language understanding and language translation. It allowed our client to expand their reach by 15%, targeting users from various language groups.

Continue reading our case for more details on the project and technologies used.

Why GPT Models Need Optimization

GPT models are powerful and well-trained. However, they may need further optimization for the model to learn specific tasks and domains. Here are five points to keep in mind:

- Domain specifics: Pre-trained GPT models are generalists. Their knowledge is wide but not necessarily in your area. Tailoring GPT to business needs allows you to teach the model specialized topics so it can understand and generate related content.

- Enhancement in task performance: GPT models can manage many language-related functions, yet their out-of-the-box capabilities are limited. Fine-tuned models perform better on the specific tasks they were trained for, such as answering questions or language translation.

- Training data: When you train a GPT model on your own text data, it better understands your business specifics and speaks your language. Used effectively, this training increases the accuracy and relevance of the model’s responses.

- Ethics: A business-focused GPT training process helps you generate content that meets the ethical standards and guidelines of your market.

- Cost and speed: Once a fine-tuned model generates the human-like text you need, you may be able to run it locally (for instance, when it is a self-hosted GPT-style model). This can save money on tokens and reduce response time, which is especially handy for question-answering solutions and apps that need quick responses.

Fine-tuning GPT for Your Business: How to Make It Right

Now that we know why we use GPT models, let us discuss how to fine-tune GPT for specific business needs.

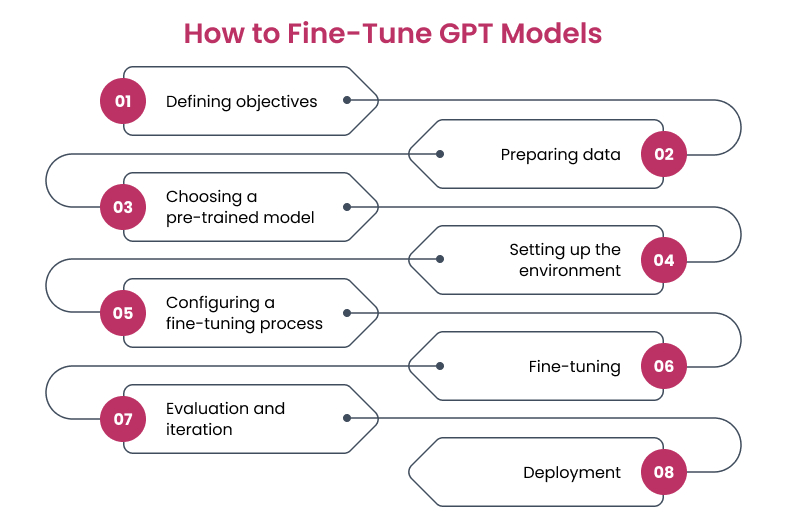

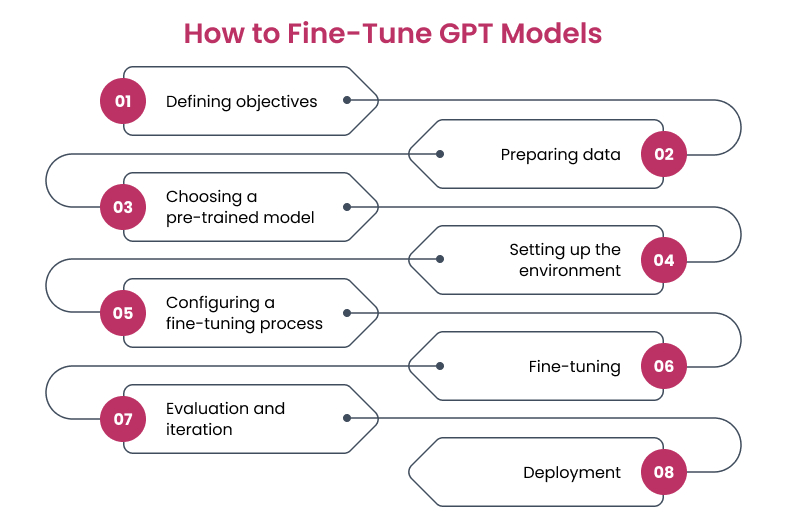

1. Defining Objectives

Before GPT model training and implementation, engineers clearly identify what they want to achieve with the model. It could include improving customer service, generating content, aiding in decision-making, or automating specific tasks. After objectives are set, engineers start to think about basic concepts of how to train a GPT model to generate text.

2. Preparing Data

To train a GPT model effectively, engineers need a high-quality dataset relevant to the task. The training data should be:

- cleaned of irrelevant and corrupt data;

- formatted as a series of prompts and responses, or as labeled examples for text classification tasks such as sentiment analysis;

- divided into sets for training a GPT model, its validation, and testing.
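The three requirements above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not the pipeline used in the project: the function names, the default system prompt, and the 80/10/10 split are assumptions made for the example. The chat-style `messages` layout is one common format for prompt and response pairs.

```python
import json
import random


def clean(pairs):
    """Drop empty, whitespace-only, and duplicate prompt/response pairs."""
    seen, kept = set(), []
    for prompt, response in pairs:
        prompt, response = prompt.strip(), response.strip()
        if not prompt or not response or (prompt, response) in seen:
            continue
        seen.add((prompt, response))
        kept.append((prompt, response))
    return kept


def to_chat_record(prompt, response, system="You are a friendly mascot."):
    """Wrap one pair in a chat-style layout (system prompt is a placeholder)."""
    return {"messages": [
        {"role": "system", "content": system},
        {"role": "user", "content": prompt},
        {"role": "assistant", "content": response},
    ]}


def split(records, train=0.8, val=0.1, seed=42):
    """Shuffle deterministically, then cut into train/validation/test sets."""
    records = list(records)
    random.Random(seed).shuffle(records)
    n_train = int(len(records) * train)
    n_val = int(len(records) * val)
    return (records[:n_train],
            records[n_train:n_train + n_val],
            records[n_train + n_val:])


def write_jsonl(path, records):
    """Write one JSON object per line, keeping non-ASCII text readable."""
    with open(path, "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record, ensure_ascii=False) + "\n")
```

A typical call chain would be `clean(...)`, then `to_chat_record(...)` for each pair, then `split(...)`, and finally `write_jsonl(...)` once per resulting set.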

3. Choosing a Pre-Trained Model

It is time to select a pre-trained model architecture that is closest to the language model the business needs. To find the right model for virtual assistant development, there are several criteria you should consider:

- Task compatibility: First off, ensure that the pre-trained model is suitable for your task.

- Architecture size: A smaller model is better if resources are scarce, while a larger one is the choice for higher performance when enough computational power is available.

- Available implementations: Depending on your framework, some models could have better support and optimization.

- Community resources: Models with large communities around them can have helpful resources, such as tutorials or pre-built scripts, that will make the process of fine-tuning easier.

- Computational resources: Just like with size, smaller models require less computational power compared to larger ones. Consider the resources you have available.

- Performance and speed: You will need to find a model that can balance both speed and performance for your tasks.
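To make the size and computational-resource criteria above more concrete, here is a rough back-of-the-envelope memory estimate. It is a sketch under stated assumptions: the figure of 16 bytes per parameter is a common rule of thumb for full fine-tuning with the Adam optimizer in mixed precision, activations and batch size are ignored, and all function names are hypothetical.

```python
# Bytes needed to store one parameter at a given numeric precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}

# Rule of thumb (assumption) for full fine-tuning with Adam in mixed precision:
# fp16 weights (2) + fp16 gradients (2)
# + fp32 master weights, momentum, and variance (4 + 4 + 4 = 12).
BYTES_PER_PARAM_FULL_FINETUNE = 16


def weights_gb(n_params, dtype="fp16"):
    """Memory for the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * BYTES_PER_PARAM[dtype] / 1e9


def full_finetune_gb(n_params):
    """Rough lower bound for full fine-tuning; activations are not counted."""
    return n_params * BYTES_PER_PARAM_FULL_FINETUNE / 1e9


def fits(n_params, gpu_memory_gb, training=False):
    """Check whether the estimate fits into the given accelerator memory."""
    needed = full_finetune_gb(n_params) if training else weights_gb(n_params)
    return needed <= gpu_memory_gb
```

For a hypothetical 1.5-billion-parameter model, the weights alone take about 3 GB in fp16, while full fine-tuning needs roughly 24 GB before activations, which is why a model that runs comfortably on a given GPU may still be too large to fine-tune on it.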

4. Setting Up the Environment

Ensure your developers have access to the necessary hardware to train the model efficiently and install the required machine-learning libraries and dependencies. Having the right tools will help your developers and will provide you with a fine-tuned model much faster.

5. Configuring the Fine-Tuning Process

At the time of writing, there are five versions of the GPT model on the market. Each version improves on the previous one, since it was trained more extensively and includes new features. GPT-4 is only starting to be adopted, and most chatbots today are based on GPT-3.5.

Before the training process of the model, AI developers define hyperparameters of the fine-tuning process. The right choice of these settings is important, as they can significantly impact the performance of a machine learning model. Common examples of hyperparameters are the learning rate, number of epochs, or batch size.
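The three hyperparameters named above can be kept in one small, validated configuration object. The sketch below is illustrative only: the class name and the default values are assumptions, not recommendations, and the step count follows from simple arithmetic (batches per epoch, rounded up, times the number of epochs).

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class FineTuneConfig:
    """Hypothetical container for common fine-tuning hyperparameters."""
    learning_rate: float = 2e-5  # illustrative default, tune per task
    epochs: int = 3              # full passes over the training set
    batch_size: int = 8          # examples per optimization step

    def __post_init__(self):
        # Reject values that cannot describe a valid training run.
        if self.learning_rate <= 0:
            raise ValueError("learning_rate must be positive")
        if self.epochs < 1 or self.batch_size < 1:
            raise ValueError("epochs and batch_size must be at least 1")

    def steps_per_epoch(self, n_examples):
        """Number of batches needed to see every example once."""
        return math.ceil(n_examples / self.batch_size)

    def total_steps(self, n_examples):
        """Optimization steps over the whole run."""
        return self.steps_per_epoch(n_examples) * self.epochs
```

With 1,000 training examples, the defaults give 125 steps per epoch and 375 steps in total; raising the batch size to 32 and lowering the epochs to 2 gives 64 steps.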

6. Fine-Tuning the GPT Model

Finally, it’s time for training GPT models. Here a pre-trained neural network is further trained on specific preprocessed text data. The model learns from the nuances of your training data and adapts its internal parameters to better suit the defined business objectives.
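With a hosted model, this training step is often a single API request rather than a local training loop. The sketch below assumes the OpenAI Python SDK (v1.x) and its `client.fine_tuning.jobs.create(...)` call; the article does not state which tooling the team used, and the helper names, file IDs, and model name shown here are placeholders. The `client` object is passed in, so the request can be inspected or tested before anything is sent.

```python
def build_job_request(training_file_id, base_model, n_epochs=3,
                      validation_file_id=None, suffix=None):
    """Assemble keyword arguments for a fine-tuning job (hypothetical helper)."""
    request = {
        "training_file": training_file_id,   # ID of an uploaded JSONL file
        "model": base_model,                 # pre-trained model to start from
        "hyperparameters": {"n_epochs": n_epochs},
    }
    if validation_file_id:
        request["validation_file"] = validation_file_id
    if suffix:
        request["suffix"] = suffix           # label added to the new model name
    return request


def start_fine_tuning(client, request):
    """Submit the job; `client` is assumed to be an `openai.OpenAI()` instance."""
    job = client.fine_tuning.jobs.create(**request)
    return job.id
```

A call might look like `start_fine_tuning(client, build_job_request("file-abc123", "gpt-3.5-turbo", suffix="mascot"))`, where the file ID is a placeholder for a previously uploaded training file.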

7. Evaluating and Iterating the Model

After the model is fine-tuned, engineers assess the model’s performance using input sequences of a test data set and the evaluation metrics defined earlier. Based on the results, developers may proceed with the deployment or go back and adjust the dataset, vocabulary size, or change the model’s parameters. In some instances, you might even choose a different pre-trained model to start from.
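The evaluate-then-decide loop described above can be sketched as follows. Exact-match accuracy stands in here for whatever metrics a project actually defines, and it is a crude measure for free-form generated text. The `generate` callable, the function names, and the 0.9 threshold are all assumptions made for illustration.

```python
def normalize(text):
    """Lowercase and collapse whitespace so trivial differences do not count."""
    return " ".join(text.lower().split())


def exact_match_accuracy(generate, test_set):
    """Share of test prompts whose output equals the reference answer.

    `generate` is any callable mapping a prompt string to the model's reply;
    `test_set` is a list of (prompt, reference_answer) pairs.
    """
    if not test_set:
        raise ValueError("test_set must not be empty")
    hits = sum(normalize(generate(prompt)) == normalize(reference)
               for prompt, reference in test_set)
    return hits / len(test_set)


def next_step(accuracy, threshold=0.9):
    """Deploy when the score clears the bar, otherwise go back and iterate."""
    return "deploy" if accuracy >= threshold else "iterate"
```

If the result is `"iterate"`, the team would revisit the dataset, the hyperparameters, or the choice of base model before evaluating again.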

8. Deployment

Once your team is satisfied with the fine-tuned model’s performance, they deploy it to a production environment.

Conclusion

GPT models are a versatile tool for businesses, with ChatGPT being just a glimpse of their potential. We have covered steps on how to train a GPT model and what you can expect as a result.

Additionally, we made the business-specific GPT customization process look simple for explanation purposes. However, model fine-tuning is more complicated than it seems and requires computational resources and proven AI expertise in base model training. In other words, having a vision and vast training and validation sets for transfer learning is not enough.

Fortunately, you have found us, an experienced NLP development company. We at CHI Software are experts in chatbot development and integration. Do you want to know how to train your GPT and are interested in generative AI consulting? Take the first step toward your success by sending us a short request. Finding the right development team is easier than you think. We are right here.

FAQs

Can I customize GPT to meet my business needs?

It is possible to customize GPT to meet specific business needs. This process involves fine-tuning a pre-trained GPT model with specific text data and hyperparameters. By doing so, businesses can significantly enhance the relevance and usefulness of custom GPT applications for their domains and operations. For example, you can start leveraging language translation for new data points. Or have better communication thanks to sentiment analysis based on neural networks’ text classification.

What is fine-tuning a GPT model?

Fine-tuning a generative pre-trained transformer model means retraining a ready-made transformer architecture to work better for certain business tasks or needs. This is known as GPT model optimization. It is achieved by training GPT models with domain-specific text data, making generative models more relevant and accurate in their responses.

Why do businesses need to customize pre-trained GPT models?

Businesses customize pre-trained GPT models so that they align with unique operational needs and produce industry-specific, human-like text. Custom GPT applications provide targeted and effective use of deep learning technology, enhance user experience, and improve interaction quality.

What are the essential steps in fine-tuning GPT models?

The main steps in fine-tuning GPT models include identifying customization goals, preparing data, choosing a GPT model and the right set of hyperparameters for text generation, and setting up the environment. After training the GPT model, it is time to feed it input text, evaluate the generated output, and repeat the cycle if needed. The final step is to deploy the model in real-world applications.

What do you need to consider when selecting a GPT model for fine-tuning?

When selecting a GPT model to be fine-tuned, it is important to consider its size and complexity, compatibility with your data, data pre-processing efforts, and model architecture. Assessing these aspects helps ensure that the GPT model chosen for optimization is well-suited to meet your demands. A larger model may offer greater capabilities but require significant computational resources to convert words into coherent text, while a smaller one might be more practical but less powerful.

About the author

Olha boasts a decade-long journey in NLP, currently serving as a researcher at Jena University and a Consulting ML/NLP Engineer at CHI Software. Her expertise extends to various realms of NLP, including text summarization, named entity recognition, and keyword extraction. Olha's Ph.D. thesis explored knowledge representations and information retrieval in librarian systems.
